“Innovation Engineering” has a major influence on growth and survival in today’s world. As a method for solving technology and business problems in order to innovate, adapt, and enter new markets using expertise in emerging technologies, it is an essential tool for creative minds.

Much like every other industry in the world, the video game development industry is more open to new ideas and products than ever. In fact, this openness has never been more critical. New technologies emerge, as do new business models. Thanks to innovation culture, the video game development process has grown beyond classic software engineering and evolved into modern innovation engineering.

The latest release of The Occult is a meticulous combination of software and innovation engineering. To maximize productivity and efficiency, outside-the-box thinking was vital. I had to come up with creative and unorthodox problem-solving methods. So, rather than waiting for a-ha! moments, I relied on cross-disciplinary research and practices for creativity, and on classic engineering methodology for productivity, simply harnessing the best of both worlds… And it worked like a charm!

The Occult version 2022.2 is released!

The Occult, a virtual gameplay programming ecosystem for Unreal Engine, is now available for both Intel and ARM architectures, offering improved cross-platform compatibility, exciting new features, enhancements, and a few bug fixes. As usual, all commercial and personal video game development projects that I am currently involved in will benefit from the new and enhanced features in this release.

New Architecture

Multiprocessing System Architecture: A user-defined number of virtual processors can perform sync/async operations at the same time. Multiprocessing read/write operations on shared code/memory are 100% thread-safe at both the virtual and physical access levels.

Multiple Stacks for Multiple Processors: A private stack is assigned to each vCPU, and a public stack is shared among all processors. The public stack is mainly used for level-scope variables and can be accessed via the Index/Data bus. Unlike the public stack, private stacks no longer use Occult’s bus architecture. They are hardwired to their parent vCPUs for exceptionally low-latency access!
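To make the private/public stack split more concrete, here is a minimal C++ sketch. It is not The Occult's implementation, which is proprietary. Every name in it (`PublicStack`, `VirtualCpu`, `runStackDemo`) is hypothetical, and a plain `std::mutex` stands in for whatever synchronization the Index/Data bus actually provides. The only idea it illustrates is that a private stack owned by a single vCPU needs no locking, while the shared stack does.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical shared stack: every access is serialized by a mutex
// (an assumed stand-in for bus-mediated access).
class PublicStack {
public:
    void push(std::int32_t value) {
        std::lock_guard<std::mutex> lock(mutex_);
        data_.push_back(value);
    }
    std::size_t size() const {
        std::lock_guard<std::mutex> lock(mutex_);
        return data_.size();
    }
private:
    mutable std::mutex mutex_;
    std::vector<std::int32_t> data_;
};

// Hypothetical virtual processor: owns its private stack outright,
// so it can touch that stack without any synchronization.
struct VirtualCpu {
    std::vector<std::int32_t> privateStack;
    PublicStack* shared = nullptr;

    void run(std::int32_t count) {
        for (std::int32_t i = 0; i < count; ++i) {
            privateStack.push_back(i); // direct, lock-free access
            shared->push(i);           // synchronized access
        }
    }
};

// Runs cpuCount vCPUs in parallel and returns the final public stack size.
inline std::size_t runStackDemo(std::size_t cpuCount, std::int32_t pushesPerCpu) {
    PublicStack shared;
    std::vector<VirtualCpu> cpus(cpuCount);
    for (auto& cpu : cpus) cpu.shared = &shared;

    std::vector<std::thread> workers;
    for (auto& cpu : cpus) {
        workers.emplace_back([&cpu, pushesPerCpu] { cpu.run(pushesPerCpu); });
    }
    for (auto& worker : workers) worker.join();

    // Each private stack saw only its own owner's pushes.
    for (const auto& cpu : cpus) {
        assert(cpu.privateStack.size() == static_cast<std::size_t>(pushesPerCpu));
    }
    return shared.size();
}
```

Calling `runStackDemo(4, 1000)` starts four workers that each push 1000 values onto both stacks. No pushes to the shared stack are lost because each one happens under the lock.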

Virtual/Native Code Switching: Instant code switching at instruction-level precision makes it possible to inject native C++ code into virtual Assembly code and vice versa, opening up endless possibilities for hardcore code optimization.
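One way to picture instruction-level switching is a tiny interpreter in which any single instruction may hand control to a native C++ callable. The sketch below rests on assumptions: the opcode set, `MachineState`, and `execute` are invented for illustration and say nothing about how The Occult encodes or dispatches its instructions. It also shows only one direction, native code injected into a virtual program.

```cpp
#include <cassert>
#include <cstdint>
#include <functional>
#include <vector>

// Hypothetical machine state shared by virtual and native code.
struct MachineState {
    std::int64_t accumulator = 0;
};

// Hypothetical opcode set; CallNative is the switch point.
enum class OpCode { LoadImmediate, AddImmediate, CallNative };

struct Instruction {
    OpCode op;
    std::int64_t operand;                      // used by the immediate opcodes
    std::function<void(MachineState&)> native; // used only by CallNative
};

// Interprets the program one instruction at a time; a CallNative
// instruction runs native C++ and then returns to virtual code.
inline std::int64_t execute(const std::vector<Instruction>& program) {
    MachineState state;
    for (const Instruction& instruction : program) {
        switch (instruction.op) {
        case OpCode::LoadImmediate:
            state.accumulator = instruction.operand;
            break;
        case OpCode::AddImmediate:
            state.accumulator += instruction.operand;
            break;
        case OpCode::CallNative:
            if (instruction.native) instruction.native(state);
            break;
        }
    }
    return state.accumulator;
}

// Virtual load, native multiply, virtual add: (5 * 3) + 2.
inline std::int64_t runSwitchingDemo() {
    std::vector<Instruction> program;
    program.push_back({OpCode::LoadImmediate, 5, nullptr});
    program.push_back({OpCode::CallNative, 0,
                       [](MachineState& s) { s.accumulator *= 3; }});
    program.push_back({OpCode::AddImmediate, 2, nullptr});
    return execute(program);
}
```

Because the native step sees the same `MachineState` as the virtual instructions around it, a hot spot can in principle be swapped for hand-tuned C++ without touching the rest of the program.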

New Features

- Stack-based smart serialization.

- User-defined Trigger algorithm injection architecture.

- User-defined Pivot lookup table architecture.

- CCR-driven stack error reporting mechanism.

- Blazingly fast 32-bit text/numerical Tag-driven instance management.
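As an illustration of why a 32-bit tag can be fast, the sketch below packs up to four text characters into a single `std::uint32_t`, so a lookup compares integers rather than strings, and a numerical tag can use the same key type. This is an assumed design rather than a description of The Occult's tag format. `makeTextTag`, `InstanceRegistry`, and the sample tags are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <unordered_map>
#include <utility>
#include <vector>

using Tag = std::uint32_t;

// Packs up to four characters into one 32-bit value, earlier characters
// in higher bytes. Text and numerical tags share the same key space.
inline Tag makeTextTag(const char* text) {
    Tag tag = 0;
    for (int i = 0; i < 4 && text[i] != '\0'; ++i) {
        tag = (tag << 8) | static_cast<unsigned char>(text[i]);
    }
    return tag;
}

// Hypothetical registry that groups instance names under an integer tag.
class InstanceRegistry {
public:
    void add(Tag tag, std::string name) {
        instances_[tag].push_back(std::move(name));
    }
    std::size_t count(Tag tag) const {
        auto it = instances_.find(tag);
        return it == instances_.end() ? 0 : it->second.size();
    }
private:
    std::unordered_map<Tag, std::vector<std::string>> instances_;
};

// Registers two instances under a text tag and one under a numerical tag,
// then returns how many share the text tag.
inline std::size_t runTagDemo() {
    InstanceRegistry registry;
    registry.add(makeTextTag("DOOR"), "Door_A");
    registry.add(makeTextTag("DOOR"), "Door_B");
    registry.add(Tag{42}, "Pickup_42");
    assert(makeTextTag("DOOR") == 0x444F4F52u); // 'D','O','O','R' as bytes
    return registry.count(makeTextTag("DOOR"));
}
```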

Enhancements

- All coprocessors (Trigger, Flagger, Pooler) are enhanced to take advantage of the new multiprocessing system.

- The new speed/size-optimized Flagger node graph search algorithm is written in 92 bytes (yes, ninety-two!) using inline Assembly; small enough to fit comfortably in physical cache.

- Enhanced native Intel architecture support for PC.

- Enhanced native ARM architecture support for Apple Silicon Macs and Nintendo Switch.

Improved Game Executable Compatibility

- Windows 10 – (21H2)

- Windows 11 – (22H2)

- macOS Big Sur 11.7 – (Intel/Apple Silicon)

- macOS Monterey 12.6 – (Apple Silicon only)

- iOS 14

- iOS 15

- iOS 16

- Nintendo Switch

Improved C++ Code Compatibility

- Unreal Engine 4 – (4.26.2, 4.27.2)

- Unreal Engine 5 – (5.0.3)

Improved C++ Development Environment Compatibility

- Microsoft Visual Studio 2019 version 16.11.19

- Microsoft Visual Studio 2022 version 17.3.5

- Apple Xcode version 14.0.1

- JetBrains Rider 2022.2.3

Bug Fixes

- Macro-driven Load/Save settings filename obfuscation.

- Immediate Operand format standardization.

- Trigger Smart 2-Way OnFound/OnNotFound exception implementation.

Availability: The Occult is not available for public use. It is proprietary software.

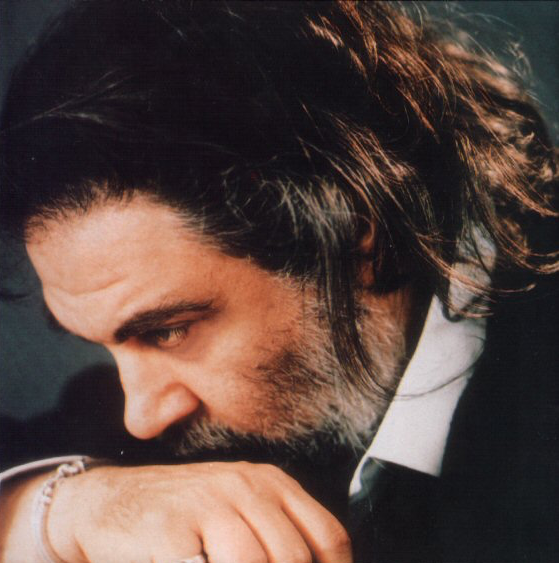

Copyright Notice: The Occult VM architecture and all related tools are conceived, designed, implemented and owned by Mert Börü. The Occult logo is designed and crafted by Tuncay Talayman.

Credo quia absurdum!
